- When AI Decisions Leave the Screen

For decades, artificial intelligence operated at a safe distance. It analyzed data and suggested actions, but humans remained the final decision-makers. That boundary has dissolved with the rise of Physical AI: systems that sense, decide, and act directly in the physical world.

Whether it is an autonomous vehicle braking, an industrial robot collaborating with humans, or an AI managing a power grid, the loop between decision and consequence is now closed. Unlike a digital recommendation that can be ignored, a physical action can cause operational failure, environmental damage, or loss of life. In this new reality, accountability is no longer an abstract concept; it is a core operational requirement.

- The Accountability Gap in AI Systems

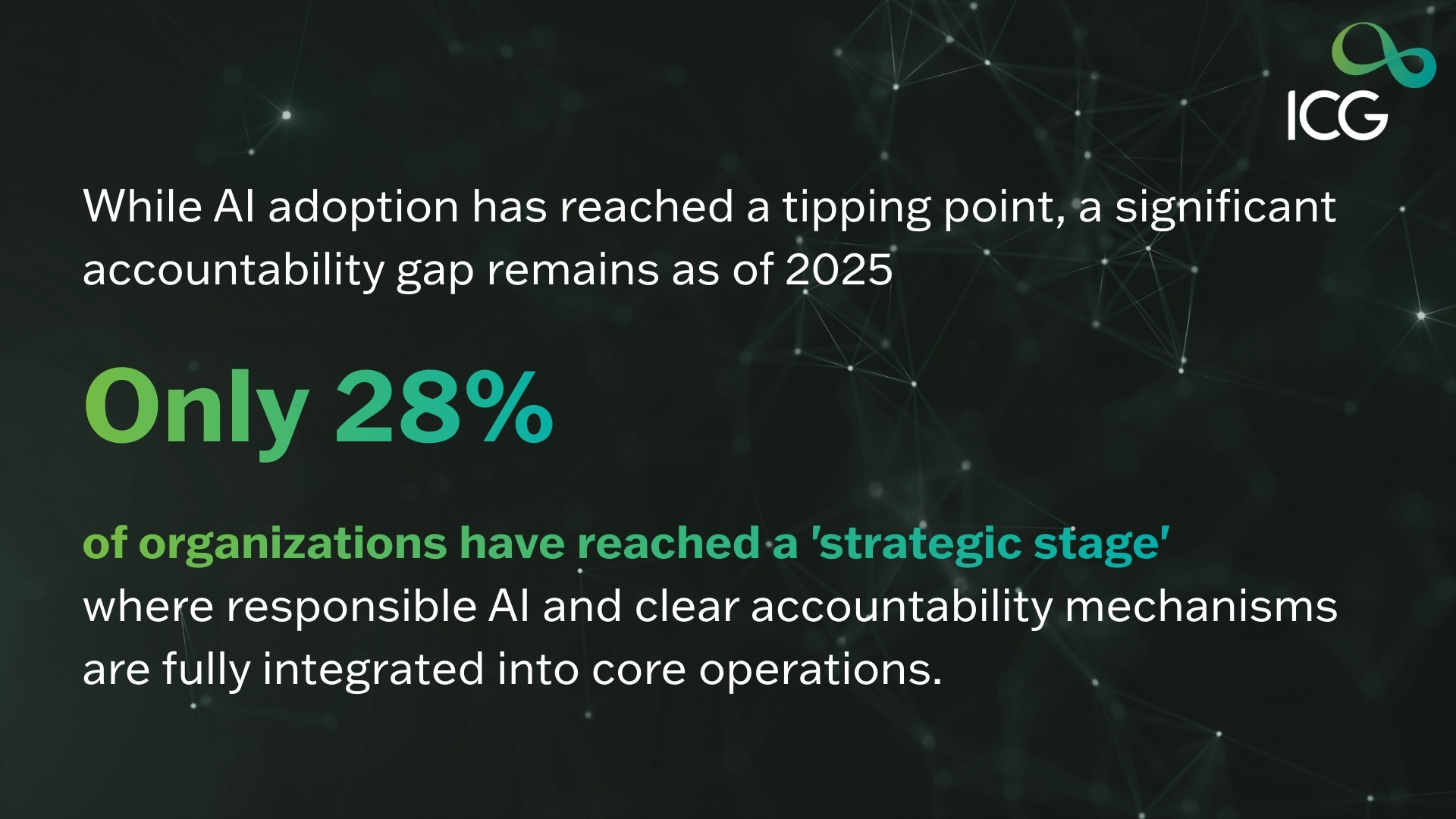

Despite the growing deployment of AI in high-impact environments, accountability remains alarmingly unclear.

We’ve seen the reports from the World Economic Forum and the big consulting firms, and they all say the same thing: most companies are flying blind when it comes to AI governance. In fact, more than half of organizations don’t have a mature framework in place.

It gets worse. Less than a third of these companies can actually answer the “gut check” questions:

- Who is actually on the hook for an AI-driven decision?

- Who pays the price when the AI messes up or causes real-world harm?

- Who has the “kill switch” to stop or override the system?

Right now, most places just assume someone is in charge. Accountability is scattered across IT, legal, and data science teams, meaning no one truly owns it.

That “governance gap” might be fine if you’re just using AI to recommend a movie. But with Physical AI, that gap becomes a massive liability. When a machine in the real world glitches, pointing fingers doesn’t just waste time; it kills trust and creates a mess that’s hard to clean up.

- Why Accountability Breaks Down in Physical AI

The truth is, our current ways of keeping things in check just weren’t built for Physical AI. Traditional governance models are being pushed to the breaking point for three main reasons:

It all happens too fast.

In the digital world, you might have a second to review an AI’s suggestion. In Physical AI, decisions happen in milliseconds. If a robotic arm has to make a split-second adjustment to avoid a collision, there’s no time for a human to hit “approve”.

By the time we look at the data, the consequence has already happened. You can’t hold someone accountable after the fact if the system was never designed for human intervention in the first place.

Success has too many parents.

When a Physical AI system acts, it isn’t just the code talking. It’s a messy mix of:

- The developers who built the model.

- The data teams who decided what information the AI should learn from.

- The operations teams who set the local “rules” for deployment.

- The leadership who set the goals.

When everyone owns a tiny piece of the decision, no one truly owns the outcome. It’s easy for responsibility to get lost in the shuffle.

We’re using an old playbook for a new game.

Most of our rules for “who is responsible” assume a human is the one actually doing the work. We’re used to holding people accountable for their actions. But when a machine executes a decision autonomously, that logic falls apart. We’re left with a structural gap between how we manage risk and how these systems actually move in the real world.

- What Real Accountability Actually Looks Like

When an AI starts taking physical actions such as moving a vehicle, sorting heavy cargo, or managing a power grid, accountability can’t be a “vibe”. It has to be concrete, operational, and enforceable.

If we want to get this right, we have to move past the jargon and focus on four non-negotiables:

Name a real person, not just a department.

Every AI system needs a single “throat to choke”. We need to move beyond “the IT team owns it” to identifying a specific role, someone empowered to make tough calls on risk and actually own the consequences. It’s not just about who fixes the code; it’s about who accepts the responsibility.

Draw a hard line on human involvement.

“Oversight” is a lazy word. Organizations need to be crystal clear: are we in the loop (meaning a human clicks “OK” for every action) or on the loop (meaning the AI runs, but a human is watching and ready to jump in)? If you’re vague about who is at the wheel, nobody is.
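
To make the distinction concrete, here is a minimal Python sketch of the two oversight modes. Every name in it (`OversightMode`, `execute`, and the two callbacks) is hypothetical and chosen for illustration; a real system would wire these to its own approval and monitoring channels.

```python
from enum import Enum
from typing import Callable


class OversightMode(Enum):
    IN_THE_LOOP = "in_the_loop"  # a human approves every action before it runs
    ON_THE_LOOP = "on_the_loop"  # the system acts; a human monitors and can veto


def execute(action: str,
            mode: OversightMode,
            human_approves: Callable[[str], bool],
            human_vetoes: Callable[[str], bool]) -> str:
    """Return "executed" or "blocked" for a proposed action (illustrative only)."""
    if mode is OversightMode.IN_THE_LOOP:
        # Nothing happens without an explicit approval.
        return "executed" if human_approves(action) else "blocked"
    # On the loop: the action proceeds unless the supervisor intervenes.
    return "blocked" if human_vetoes(action) else "executed"
```

The point of the sketch is the default: when the human does nothing, an in-the-loop system stays still, while an on-the-loop system keeps going. Writing the mode down as an explicit setting forces the organization to pick one.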

Know when to pull the plug.

You wouldn’t run a factory without a physical emergency stop button. Physical AI needs the digital equivalent. We need clear “red lines”, thresholds where the system automatically escalates to a human or shuts down entirely. More importantly, we need to know exactly who is allowed to hit that button.
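
As a rough illustration, “red lines” and stop authority can be written down as data rather than left as tribal knowledge. The Python sketch below assumes a single monitored metric with two thresholds; the metric, the threshold values, and the role names are all made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RedLine:
    metric: str         # name of the monitored signal (hypothetical)
    escalate_at: float  # reading at which a human must be alerted
    shutdown_at: float  # reading at which the system stops itself


def evaluate(red_line: RedLine, reading: float) -> str:
    """Map a sensor reading to "continue", "escalate", or "shutdown"."""
    if reading >= red_line.shutdown_at:
        return "shutdown"
    if reading >= red_line.escalate_at:
        return "escalate"
    return "continue"


# Roles allowed to trigger a manual stop (assumed names, not a standard).
STOP_AUTHORIZED_ROLES = frozenset({"site_safety_officer", "shift_supervisor"})


def may_trigger_stop(role: str) -> bool:
    """Check whether a role is on the explicit stop-authority list."""
    return role in STOP_AUTHORIZED_ROLES
```

For example, with `RedLine("arm_speed_m_per_s", escalate_at=1.5, shutdown_at=2.0)`, a reading of 1.5 escalates to a human and a reading of 2.5 shuts the system down. The list of authorized roles answers the “who is allowed to hit that button” question in one place.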

Bake it in from the start.

Accountability isn’t a “patch” you can install later. It has to be part of the blueprint, built into the code, the training manuals, and the daily workflow. This isn’t just a good idea; it’s becoming the law. With things like the EU AI Act coming into play, “we’ll figure it out later” is no longer a viable business strategy.

- Why This Matters Right Now

Physical AI isn’t some “future tech” being tinkered with in a lab anymore. It’s out there right now, running our power grids, moving freight, and managing factory floors. These are high-stakes environments where a “glitch” isn’t just an annoying error message; it has real-world consequences.

At the same time, the world is watching. Governments are moving past “suggestions” and into hard laws about who is responsible when a machine makes a mistake. It’s not just regulators, either: insurers, partners, and customers are all starting to ask, “If this goes sideways, who owns the mess?”

Here’s the reality: accountability isn’t a handbrake on innovation. It’s the fuel that lets you scale.

If you can’t point to exactly who is responsible when your AI acts, you’re going to hit a wall, legally and socially. But if you bake that responsibility into the very foundation of your tech, you’re doing more than just staying compliant. You’re building the trust necessary to actually lead in the physical world.

The ICG Approach:

At ICG, we offer a customized approach that empowers your teams with the latest insights and technology expertise to navigate the demands of today’s digital age. As Saudi Arabia embarks on its digital transformation journey, ICG plays a pivotal role in shaping the Kingdom’s tech landscape by providing cutting-edge solutions, strategic consultancy, and fostering innovation. Our comprehensive guidance, from fundamental concepts to practical implementation, helps organizations mitigate risks, stay ahead of the competition, and unlock their full potential in the accelerating digital environment.

Ready to talk?

Request your free consultation to learn more about ICG’s capabilities and how we can support your transformation.